Hi all,

in my workflow, I compute the limits by throwing toys with

root -l -b -q StandardHypoTestInvDemo.C > /dev/null

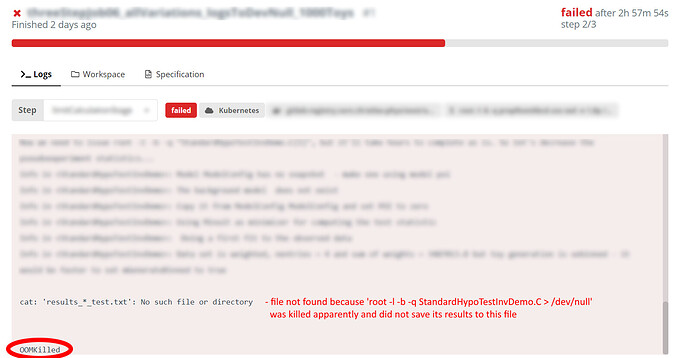

I noticed that when I set the number of toys in this macro to 500, the job runs fine and produces an output file in about two hours. If I set it to 1000, however, the job runs for three hours, then (from the looks of it) gets killed, and execution jumps to the next line of the script. The last line of the log shows `OOMKilled`. I guess I'm hitting some sort of limit, either time or memory. When I run on HTCondor (a regular submission, nothing to do with Docker, yadage, or anything else), even 20000 toys do not cause any issues. So how can I find out why REANA is killing the job?

Link to the problematic job: https://reana.cern.ch/details/056b3b5e-30f6-47b8-a5dc-e717b6960cb0; see the log of the last step.

Thanks!

A screenshot to demonstrate the issue:

Hi @ysmirnov, `OOMKilled` indeed means that the job was killed because it ran out of memory, i.e. it exceeded its memory limit. Currently, REANA nodes allow about 6 GiB of memory per job. Do you need more than that?

There are basically two solutions:

- either decrease the RAM consumption of your job: is there something that is perhaps not necessary, or that could be garbage-collected sooner?
- or amend REANA to allow more RAM per job: we plan to introduce a workflow hint that would let researchers set their own memory limit, up to about 12 GiB per job.

Would one of these work for you? How much memory does your job need for those larger numbers of toys when running on a batch farm or HTCondor?

Hi Tibor,

thanks for your reply. The problem apparently boils down to the fact that on HTCondor I used ROOT 6.14/04, while the Docker image I use on REANA ships ROOT 6.20/06, and the memory consumption of the job differs greatly between the two versions. I'm discussing it with ROOT experts here. I'll probably have to move the REANA jobs to ROOT 6.14/04 as well, which reduces the memory consumed in the 1000-toy case from 6.9 GB to just 1.8 GB.
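A quick unit check shows why only the newer ROOT version trips the limit. With the roughly 6 GiB per-job limit mentioned earlier in the thread,

$$
6\,\mathrm{GiB} = 6 \times 2^{30}\,\mathrm{B} = 6\,442\,450\,944\,\mathrm{B} \approx 6.44\,\mathrm{GB},
$$

so the 6.9 figure exceeds the limit whether it is meant in GB ($10^9$ B) or GiB ($2^{30}$ B), while the 1.8 figure leaves ample headroom.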

Thanks, interesting observations! If you only need plain ROOT with RooFit, you could try our `reanahub/reana-env-root6:6.18.04` Docker image, just to see how 6.18 behaves…